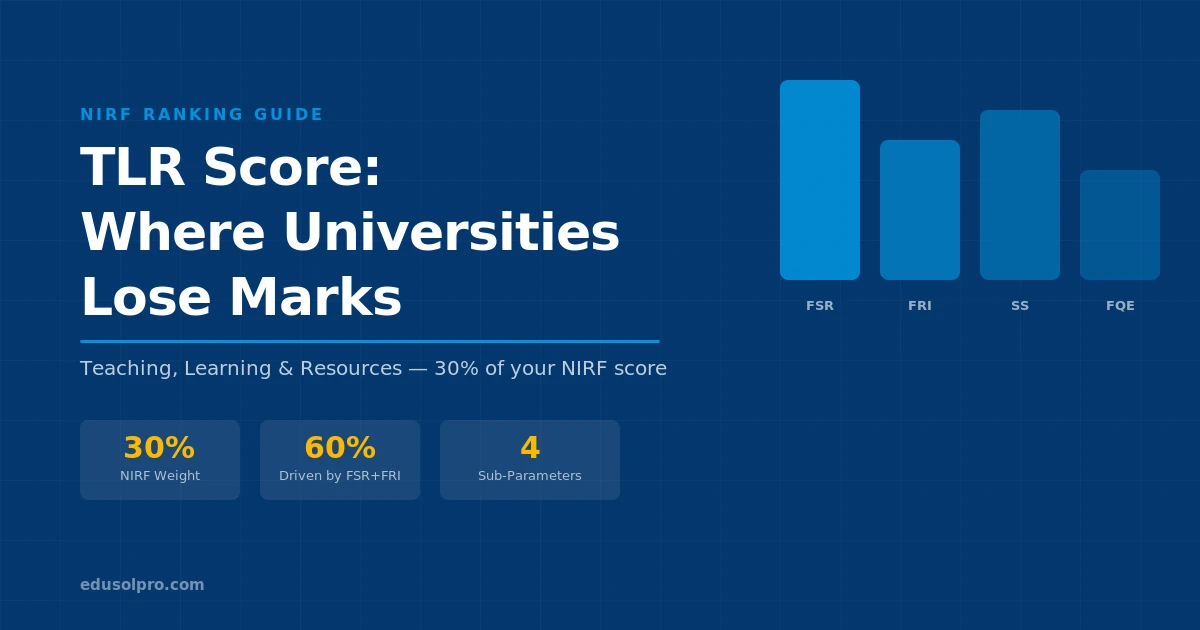

The Teaching, Learning and Resources (TLR) parameter carries 30% of your NIRF score: the same weight as Research, and more than Graduation Outcomes, Outreach, or Perception. Most universities treat TLR as the “infrastructure” parameter and stop there.

That’s a mistake. TLR isn’t primarily about buildings. It’s about the quality and completeness of your institutional data and how NIRF counts faculty credentials, student numbers, and spending.

This article breaks down each TLR sub-parameter, shows where institutions consistently lose marks, and gives you a realistic 12-month plan.

What TLR actually measures

TLR has four sub-parameters, each with its own weight within the 100-point TLR score:

The first thing to notice: FSR and FRI together account for 60% of the TLR score. Both are heavily data-dependent. Institutions lose the most marks here by getting the numbers wrong, whether by inflating them or by missing legitimate entries.

The sanctioned vs. enrolled trap

Student Strength (SS) is where universities make their first error.

NIRF doesn’t just count enrolled students against a fixed denominator. The formula compares actual student strength to sanctioned intake and computes a normalised score against peer institutions. If your actual enrolment is significantly below sanctioned intake, your SS score suffers, not because you have fewer students, but because NIRF interprets low fill rates as a signal of weakness.

What universities get wrong: they report only the current year’s enrolment without including PhD scholars who are still registered but haven’t submitted. They also often omit distance-mode students, part-time students, and students in affiliated programmes that legitimately belong in the count.

What to do: Pull a complete headcount from your student records system. Include every category NIRF recognises — UG, PG, PhD (enrolled, not just awarded), lateral entry, integrated programmes. Cross-check against your last NIRF DCS submission and identify where numbers were underreported.
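The cross-check described above can be sketched in a few lines of Python. This is a minimal illustration, not a NIRF tool: the category labels, the headcounts, and the `find_underreported` helper are all hypothetical, and the real DCS field names should be taken from your own submission.

```python
# Hypothetical category labels and headcounts, for illustration only.
def find_underreported(records_headcount, dcs_submission):
    """Return categories where the student records system shows more
    students than the last DCS submission did, with the size of the gap."""
    gaps = {}
    for category, actual in records_headcount.items():
        reported = dcs_submission.get(category, 0)  # a missing field counts as 0
        if actual > reported:
            gaps[category] = actual - reported
    return gaps


# Headcount pulled from the student records system (made-up numbers).
records = {
    "UG": 4200,
    "PG": 900,
    "PhD (enrolled)": 150,
    "Lateral entry": 60,
    "Integrated": 120,
}

# What was entered in the last DCS submission (made-up numbers).
dcs = {
    "UG": 4200,
    "PG": 900,
    "PhD (enrolled)": 95,
    "Integrated": 120,
}

gaps = find_underreported(records, dcs)
for category, missing in gaps.items():
    print(f"{category}: {missing} students missing from the last DCS submission")
```

In this made-up data the gaps are in enrolled PhD scholars and lateral entry, the kind of categories that tend to be left out.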

Faculty–Student Ratio: the calculation that surprises most institutions

FSR is the most misunderstood TLR sub-parameter.

The common assumption is that FSR = total students ÷ total faculty. NIRF’s formula is more specific. It is built around permanent faculty with regular appointments. Visiting faculty, adjunct faculty, and guest lecturers either don’t count or count at a steeply discounted rate, depending on the discipline and NIRF category.

Faculty with PhDs are weighted higher than those without. In disciplines where the PhD isn’t standard (some fine arts, design, or professional programmes), this can hurt institutions unfairly, but the methodology is fixed. The response has to be strategic hiring.

What to audit right now:

- Pull every faculty member’s appointment letter. Identify who is genuinely on regular payroll vs. contract or visiting.

- Check which of your permanent faculty hold PhDs. Run this against your DCS entry. Gaps here are common because HR records and academic records often aren’t synchronised.

- Calculate your real FSR before submitting. If it’s materially different from last year, understand why before NIRF asks you to explain it.
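To see how much the appointment type changes the ratio, here is a rough Python sketch of the audit above. It does not reproduce NIRF’s official formula. It only contrasts a naive students-per-faculty figure with one that counts regular appointments alone. The `Faculty` record, the appointment labels, and the `visiting_weight` parameter are assumptions made for illustration; the actual discount for visiting faculty should be taken from the NIRF methodology document.

```python
from dataclasses import dataclass


@dataclass
class Faculty:
    name: str
    appointment: str  # "regular", "contract", or "visiting" (hypothetical labels)
    has_phd: bool


def students_per_faculty(total_students, faculty, visiting_weight=0.0):
    """Students per counted faculty member.

    Regular appointments count fully. Visiting faculty count at
    `visiting_weight`, an assumed placeholder rather than an official
    NIRF value. Contract faculty are excluded in this sketch.
    """
    counted = 0.0
    for member in faculty:
        if member.appointment == "regular":
            counted += 1.0
        elif member.appointment == "visiting":
            counted += visiting_weight
    if counted == 0:
        raise ValueError("no countable faculty")
    return total_students / counted


# Made-up department: five names on the list, three on regular payroll.
faculty = [
    Faculty("A", "regular", True),
    Faculty("B", "regular", False),
    Faculty("C", "regular", True),
    Faculty("D", "contract", True),
    Faculty("E", "visiting", False),
]

naive_ratio = 60 / len(faculty)                   # everyone counted
audited_ratio = students_per_faculty(60, faculty)  # regular appointments only
print(f"naive: {naive_ratio:.1f} students per faculty, audited: {audited_ratio:.1f}")
```

Running the audited figure for last year’s data as well makes any year-on-year jump visible before submission.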

PhD faculty percentage: a 2–3 year problem, not a 1-year fix

NIRF rewards institutions where a higher proportion of faculty hold PhDs. This is a long-horizon metric — you can’t hire PhD faculty overnight, and established faculty can’t complete doctorates in a year.

What you can do in the short term: Identify faculty members currently enrolled in part-time PhD programmes at recognised universities. Make sure they’re listed correctly in your DCS submission with their enrolment status. Completing a PhD while employed is counted differently than being ABD (all-but-dissertation), but enrolment in a recognised programme is trackable.

What you can do in the medium term (12–24 months): Build a policy that prioritises PhD holders in all new faculty recruitment. Even replacing one or two contract faculty with permanent PhD faculty changes your ratio meaningfully. Sponsoring existing faculty for part-time PhDs at affiliated universities is also legitimate and auditable.

What not to do: Don’t count faculty as PhD-holding based on submitted theses that haven’t been formally awarded. NIRF counts awarded PhDs, not submitted ones. This is a common source of discrepancies between what institutions believe their score should be and what NIRF calculates.
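The gap between “submitted” and “awarded” is easy to quantify. The sketch below uses made-up status labels (`awarded`, `submitted`, `enrolled`, `none`) and a hypothetical `phd_share` helper. It shows only the counting rule described above, not NIRF’s scoring function.

```python
from collections import Counter


def phd_share(statuses, counted=("awarded",)):
    """Fraction of faculty whose PhD status is in `counted`.

    By default only formally awarded PhDs are counted, as described above.
    """
    if not statuses:
        raise ValueError("empty faculty list")
    counts = Counter(statuses)
    return sum(counts[status] for status in counted) / len(statuses)


# Made-up faculty of ten: 4 awarded, 2 submitted, 1 enrolled, 3 without a PhD.
statuses = ["awarded"] * 4 + ["submitted"] * 2 + ["enrolled"] + ["none"] * 3

correct = phd_share(statuses)                            # awarded only
mistaken = phd_share(statuses, ("awarded", "submitted"))  # the common error
print(f"awarded only: {correct:.0%}, with submitted theses: {mistaken:.0%}")
```

The difference between the two figures is the discrepancy an institution would see between its own estimate and the verified one.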

Financial Resources and Utilisation: why unspent budgets hurt you

FRI is another sub-parameter institutions frequently misunderstand.

NIRF doesn’t just measure how much money an institution spends. It measures the ratio of actual expenditure to sanctioned budget. An institution with a large approved budget that spends 60% of it scores worse than an institution with a smaller budget that spends 95%.

This creates a counterintuitive situation: universities that receive large government grants but have slow disbursement processes can be penalised in rankings, even when financially strong.
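A small worked example makes the ratio concrete. This is a simplified sketch of the utilisation idea described above, using illustrative figures and a hypothetical `utilisation` helper. It is not the full FRI scoring formula, which NIRF normalises in ways not covered here.

```python
def utilisation(actual_spend: float, sanctioned_budget: float) -> float:
    """Share of the sanctioned budget that was actually spent."""
    if sanctioned_budget <= 0:
        raise ValueError("sanctioned budget must be positive")
    return actual_spend / sanctioned_budget


# Illustrative figures only (any currency unit).
large_budget = utilisation(actual_spend=60.0, sanctioned_budget=100.0)
small_budget = utilisation(actual_spend=19.0, sanctioned_budget=20.0)
print(f"large budget: {large_budget:.0%}, small budget: {small_budget:.0%}")

# The ratio can fall even when spending rises: spend goes from 80 to 90,
# but the sanctioned amount goes from 100 to 150.
year_1 = utilisation(80.0, 100.0)
year_2 = utilisation(90.0, 150.0)
print(f"year 1: {year_1:.0%}, year 2: {year_2:.0%}")
```

The second pair of figures shows why a late upward budget revision without matching expenditure pulls the ratio down.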

The expenditure categories NIRF counts:

- Capital expenditure on infrastructure (buildings, equipment, labs)

- Library and IT resources

- Faculty salaries and development programmes

- Research and development expenditure

- Student welfare and scholarships

What typically gets missed: expenditure on items that aren’t mapped to the right DCS category. Typical examples are infrastructure spending categorised as “administrative” in your accounts, or faculty development booked under “training” rather than “academic expenditure”. These are categorisation errors that reduce your apparent utilisation.

The FRI audit checklist:

- Get your last three years of audited accounts and map each expenditure line to NIRF categories

- Identify which budget heads have consistent underspending — this is where your disbursement process needs fixing

- Check if infrastructure projects in progress count as “committed expenditure” under your DCS system — some do, but the documentation requirements are strict
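The second item on this checklist, finding budget heads with consistent underspending, lends itself to a quick script. The sketch below assumes you have already extracted (sanctioned, spent) pairs per head from three years of audited accounts. The head names, the figures, and the 80% `threshold` are illustrative assumptions, not NIRF definitions.

```python
def consistently_underspent(budget_heads, threshold=0.8):
    """Return heads whose utilisation was below `threshold` in every year.

    `budget_heads` maps a head name to a list of (sanctioned, spent) pairs,
    one pair per financial year. The threshold is an assumed cut-off.
    """
    flagged = []
    for head, years in budget_heads.items():
        ratios = [spent / sanctioned for sanctioned, spent in years]
        if all(ratio < threshold for ratio in ratios):
            flagged.append(head)
    return flagged


# Made-up figures for three financial years: (sanctioned, spent).
heads = {
    "Library and IT": [(50, 48), (55, 52), (60, 58)],
    "Labs and equipment": [(200, 120), (220, 150), (250, 160)],
    "Scholarships": [(30, 29), (30, 20), (35, 34)],
}

flagged = consistently_underspent(heads)
print("Heads to investigate:", flagged)
```

A head that dips in a single year (like the scholarships line here) is not flagged; only a pattern across all three years points to a disbursement process that needs fixing.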

Infrastructure metrics: what NIRF actually counts

Infrastructure is part of FRI, not a separate sub-parameter. NIRF looks at total infrastructure investment relative to student strength and programme diversity.

Common errors:

- Listing infrastructure assets at original purchase price rather than current replacement value (NIRF wants current replacement value for fixed assets)

- Not counting shared facilities (libraries, labs, sports) that serve multiple departments

- Missing digital infrastructure — servers, licensed software, LMS platforms all count

One area consistently underreported: library resources. Physical books are counted, but licensed e-databases (JSTOR, Scopus, Emerald, Wiley) are frequently not mapped to the correct DCS field. These subscriptions represent significant expenditure and carry real weight.

A 12-month TLR improvement roadmap

- Reconcile faculty records (HR) against academic records — identify PhD gaps

- Reconcile student headcount against DCS fields — find underreported categories

- Map last year's accounts to NIRF expenditure categories — find misclassified spend

- Review visiting vs. permanent faculty split — identify data vs. reality discrepancies

- Start the process of regularising contract faculty on permanent roles where possible

- Launch faculty PhD sponsorship programme — link with local university for part-time PhD

- Fix accounts mapping — reclassify expenditure items to correct NIRF heads with CA sign-off

- Accelerate pending infrastructure projects to ensure expenditure hits this FY

- Prepare TLR data file: appointment letters, salary evidence, degree certificates for each faculty

- Compile infrastructure asset register with replacement values

- Prepare e-resource subscription documentation with spend figures

- Prepare PhD enrolment certificates for faculty currently in programmes

- Enter TLR data with full supporting documents for each field

- Have the IQAC coordinator cross-check every entry before final submission

- Don't extrapolate or estimate — NIRF audits sample entries; use only verifiable numbers

FAQs

Does visiting faculty count towards FSR at all? Visiting and adjunct faculty count at a fraction of the weight of permanent faculty. The exact treatment varies by discipline and whether they hold regular visiting appointments with documented contracts. For most institutions, the safe assumption is that visiting faculty contribute minimally to FSR — focus your efforts on permanent faculty data accuracy.

Can we count faculty who submitted PhDs but haven’t received degrees yet? No. NIRF counts formally awarded PhDs only, not submitted or under-examination theses. Including submitted-only PhDs is a common error that gets caught during verification and can result in score adjustments.

What happens if our actual student strength is far below sanctioned intake? Your SS sub-parameter score will be lower than an institution at full capacity. The practical approach: ensure every eligible student category is counted (PhD scholars, part-time, lateral entry, integrated programme students). Don’t manufacture enrolment, but don’t miss legitimate students either.

How does NIRF verify financial expenditure? NIRF relies on your audited annual accounts and the specific expenditure schedules within them. Auditor-signed documents are the only accepted proof. Internal budget summaries or management accounts are not accepted as primary evidence.

Our FRI score dropped even though we spent more this year. Why? FRI is a ratio, not an absolute number. If your sanctioned budget increased significantly but your actual spend grew at a slower rate, the utilisation ratio falls. Check whether budget revisions late in the year inflated your sanctioned amount without corresponding actual expenditure.

TLR is correctable. Most of the marks lost here aren’t because of genuine institutional weakness — they’re lost to data categorisation errors, underreported headcounts, and faculty records that don’t match what HR and academics track separately. Fix the data first, then build the structural improvements. For official NIRF parameter specifications and methodology updates, refer to the official NIRF website.
